Simple, Reliable, Persistent

Operating systems and host applications often struggle to handle persistent storage that sits on the system memory bus, as is the case with NVDIMMs. Doing so can require new semantics to distinguish volatile memory from persistent memory when re-initializing to a clean state, or to protect persistent data against faults such as stray pointers or a kernel panic.

The usual answer is to create a RAM disk, with the NVDIMM accessed as a block device. However, this introduces the overhead of the kernel block layer and of an operating system data path optimized for storage devices that include DMA engines.

Performance

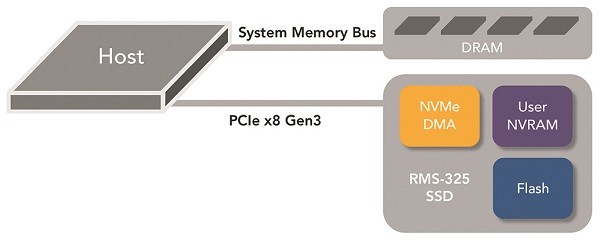

Because NVDIMMs cannot include DMA engines, the additional software overhead incurred in solving the persistent storage challenge can have a tangible impact on system performance. Alternatively, User NVRAM on a PCIe device can be accessed via a high-performance DMA engine, in addition to memory-mapped Programmed I/O. The DMA engine on the RMS-325 supports the NVMe command set, which uses an optimized data transfer queuing system that provides high performance while consuming minimal host CPU resources. The high performance is complemented by the simplicity of an interface that operating systems already understand to be persistent, avoiding the introduction of complex new concepts and semantics.

Cost Savings

Many high-end Flash SSDs already have capacitor-backed NV-DRAM that is used for internal mapping tables and write caching. Incrementally expanding this capacity with larger DRAM and capacitors generally costs less in materials than adding an entirely new NVDIMM and its remotely cabled capacitor pack. But the cost savings are not limited to DRAM and capacitors.

Flash for Free (almost)

One of the most expensive parts of an NVDIMM BoM is the NAND Flash required for backup. Because capacitor power is limited, the backup must transfer data to Flash at high bandwidth. Ironically, because of this high-bandwidth requirement, designers often find that more expensive SLC NAND is the cheaper alternative for an NVDIMM. The reason is that meeting the bandwidth requirement with slower MLC Flash takes far more Flash dies, and even the smallest available MLC dies have far more capacity than the application requires. So, for example, an 8GB NVDIMM may require 16GB of SLC for backup, but 128GB of MLC. Despite having a lower $/GB cost, the MLC solution is actually more expensive because of the large minimum capacity configuration required to meet the high-bandwidth write transfer requirement.
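The sizing argument above can be sketched as a small calculation. This is only an illustration: the 40-second hold-up window, the per-die write bandwidths, and the per-die capacities are hypothetical values chosen so that the results match the 16GB and 128GB figures quoted above. They are not measured or vendor-supplied numbers.

```python
import math

def dies_needed(data_gb, holdup_s, die_mb_per_s, die_gb):
    """Return (die_count, total_flash_gb) for a backup that must finish in holdup_s."""
    # Bandwidth the backup must sustain before the capacitors run out.
    required_mb_per_s = data_gb * 1024 / holdup_s
    # Dies needed to reach that bandwidth when written in parallel.
    dies_for_bandwidth = math.ceil(required_mb_per_s / die_mb_per_s)
    # Dies needed simply to hold the data.
    dies_for_capacity = math.ceil(data_gb / die_gb)
    dies = max(dies_for_bandwidth, dies_for_capacity)
    return dies, dies * die_gb

# Hypothetical parts: a 2GB SLC die at 26 MB/s, an 8GB MLC die at 13 MB/s.
slc = dies_needed(8, 40, die_mb_per_s=26, die_gb=2)
mlc = dies_needed(8, 40, die_mb_per_s=13, die_gb=8)
print("SLC dies, total GB:", slc)
print("MLC dies, total GB:", mlc)
```

In both cases the die count is set by bandwidth rather than by the 8GB of data, which is why the slower technology with larger dies ends up with so much unused capacity.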

Flash SSDs, by contrast, typically have dozens or even hundreds of Flash dies, with terabytes of capacity. Reserving a very small portion of capacity on each die makes it possible to use less expensive MLC/TLC technology without the huge overprovisioning that results from trying to use this memory in an NVDIMM application. Flash SSDs also reduce the capacitor backup requirement, because write transfers parallelized across so many dies complete that much faster.
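As a rough illustration of the parallelism point, the backup time can be estimated as the data size divided by the aggregate write bandwidth of the dies taking part. The die counts and the 13 MB/s per-die figure below are hypothetical, and the sketch assumes ideal scaling with no controller or channel bottleneck.

```python
def backup_seconds(data_gb, dies, die_mb_per_s):
    """Estimate backup time assuming writes are spread evenly across all dies."""
    return data_gb * 1024 / (dies * die_mb_per_s)

# Hypothetical comparison: 8GB of NVRAM backed up to 16 dies versus 128 dies.
few_dies = backup_seconds(8, dies=16, die_mb_per_s=13)
many_dies = backup_seconds(8, dies=128, die_mb_per_s=13)
print(f"16 dies:  {few_dies:.1f} s")
print(f"128 dies: {many_dies:.1f} s")
```

Under these assumptions the hold-up time the capacitors must cover shrinks in proportion to the number of dies written in parallel.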

No Remote Capacitor Packs or Cabling

Finding suitable locations for external capacitor packs is becoming more challenging as densities increase with each new chassis generation. Unlike an NVDIMM, NVRAM on a PCIe Flash SSD requires no remote capacitor packs or associated cabling.

Scalability

NVRAM capacity requirements are often proportional to the backend Flash capacities that they support. It’s challenging to incrementally add new NVDIMMs every time another SSD is added to a system or replaced by a larger one, and Flash densities have been increasing at an unprecedented rate. Upgrading or adding modules in DIMM slots can be difficult, and it becomes especially impractical when space must also be found inside an existing chassis for the additional capacitor packs and their cabling.