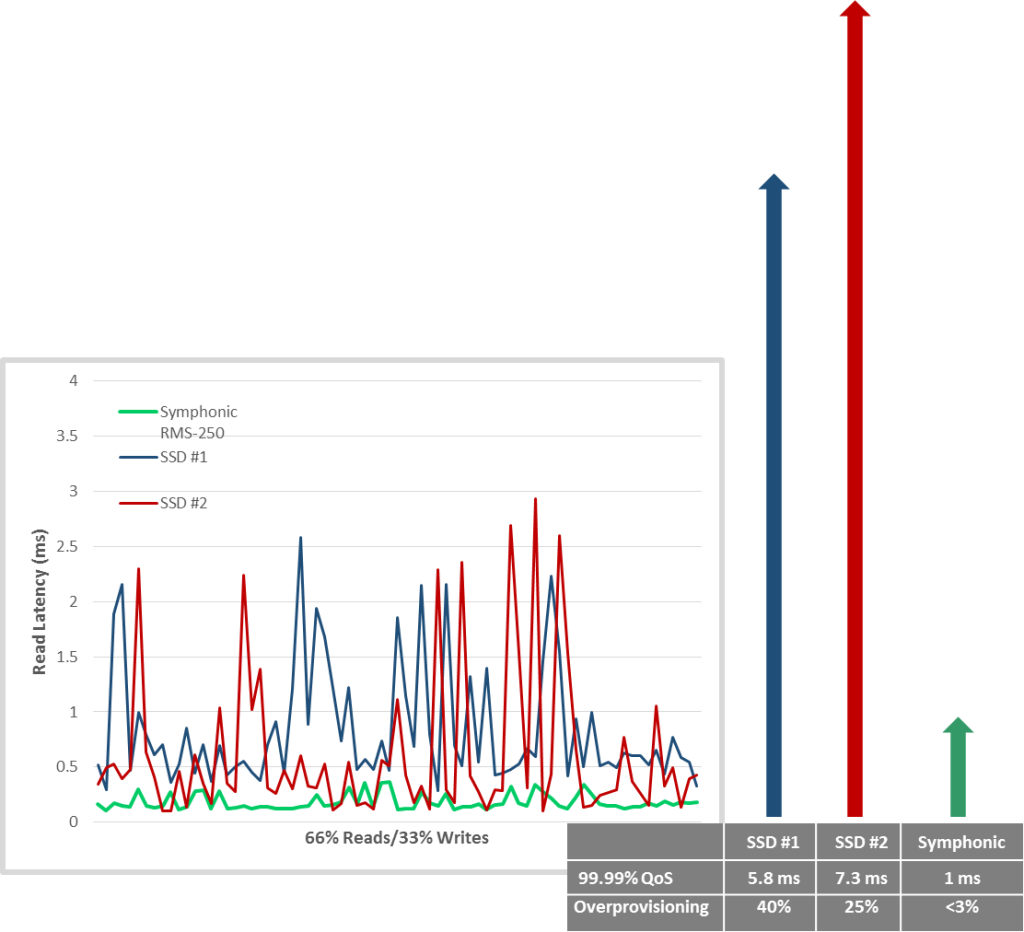

Radian’s benchmarks focus on the most typical data center workloads, which mix read and write operations at random segment offsets. The tests are based on a version of the widely used fio tester and, unless otherwise noted, involve:

- 66% random 4K reads

- 33% sequential 16K writes within random 8MB segments

- SSD QD = 128
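To make the access pattern concrete, the following is a minimal Python sketch of the request stream described above. It is not Radian's fio configuration: the function name `mixed_workload`, the fixed seed, and the choice to align reads to 4K boundaries are assumptions made for illustration, and queue depth (QD = 128) is not modeled because the sketch only generates offsets rather than issuing I/O.

```python
import random

KIB = 1024
MIB = 1024 * KIB

READ_SIZE = 4 * KIB       # random 4K reads
WRITE_SIZE = 16 * KIB     # sequential 16K writes
SEGMENT_SIZE = 8 * MIB    # writes stay inside one 8MB segment at a time
READ_FRACTION = 2 / 3     # approximates the 66% / 33% split


def mixed_workload(device_bytes, n_ops, seed=0):
    """Yield (op, offset, length) tuples approximating the described mix.

    Hypothetical helper for illustration only; it generates offsets and
    does not perform any I/O.
    """
    rng = random.Random(seed)
    n_segments = device_bytes // SEGMENT_SIZE
    n_read_slots = device_bytes // READ_SIZE
    seg_base = rng.randrange(n_segments) * SEGMENT_SIZE
    seg_pos = 0  # next write position inside the current segment
    for _ in range(n_ops):
        if rng.random() < READ_FRACTION:
            # Reads land anywhere on the device, aligned to 4K (assumption).
            yield ("read", rng.randrange(n_read_slots) * READ_SIZE, READ_SIZE)
        else:
            if seg_pos == SEGMENT_SIZE:
                # Segment is full: jump to a new randomly chosen segment.
                seg_base = rng.randrange(n_segments) * SEGMENT_SIZE
                seg_pos = 0
            yield ("write", seg_base + seg_pos, WRITE_SIZE)
            seg_pos += WRITE_SIZE
```

Each 8MB segment absorbs 512 consecutive 16K writes before the generator moves to another random segment, while reads are scattered across the whole device between those writes.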

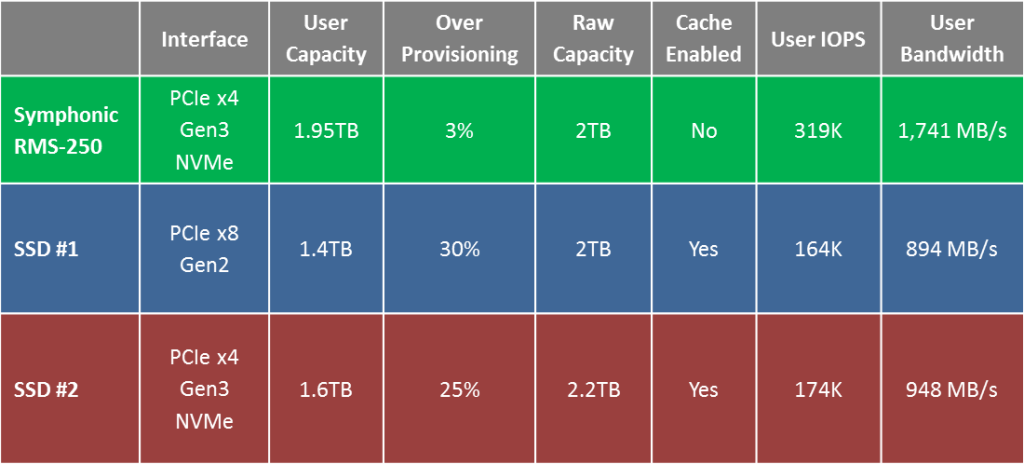

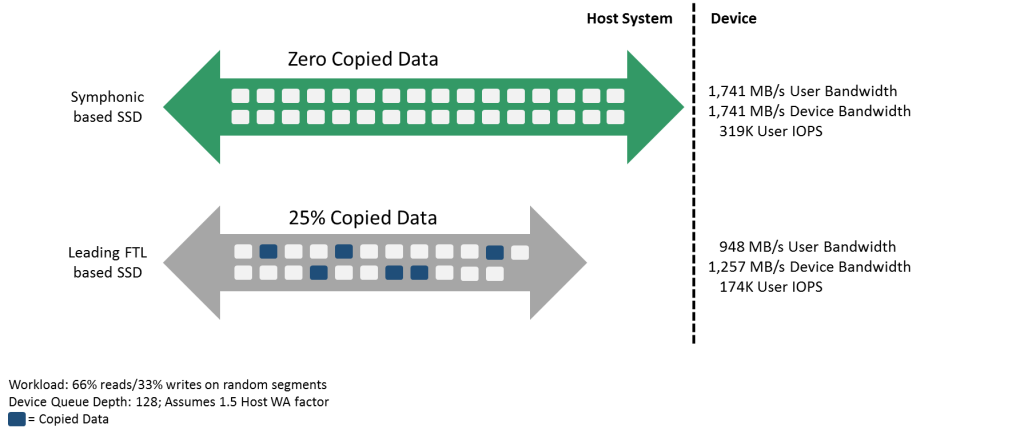

- Because caching is performed much more effectively at the system level, the Radian Symphonic SSD does not use read or write caching in any benchmark, and the fio tester itself does not enable caching. However, both FTL SSDs used in the comparative tests do enable read/write caching.

- Data center workloads mix read and write operations, most typically in a 2:1 ratio

- Purpose-built systems, distributed file systems, more recent local file systems, and object/key-value stores overwhelmingly serialize writes through variants of log-structured or append-only approaches

- This widespread approach solves write latency at the system level, but at the expense of read latency

- While serializing writes at some granularity is essential, it turns read requests, even sequential read requests, into random read operations
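The effect in the last point can be illustrated with a toy model. The sketch below assumes a simple append-only log in which every overwrite of a logical block goes to the tail of the log; the names `append_only_layout` and `contiguous_fraction` are hypothetical, and garbage collection and real segment sizes are deliberately left out.

```python
import random


def append_only_layout(n_blocks, n_overwrites, seed=0):
    """Return {logical block: physical log slot} after random overwrites.

    Toy model (assumption): writes are never done in place, they are
    always appended at the tail of a single log.
    """
    rng = random.Random(seed)
    # Initial sequential fill: logical block i lands in log slot i.
    mapping = {lba: lba for lba in range(n_blocks)}
    head = n_blocks  # next free slot at the tail of the log
    for _ in range(n_overwrites):
        lba = rng.randrange(n_blocks)
        mapping[lba] = head  # physical writes stay strictly sequential
        head += 1
    return mapping


def contiguous_fraction(mapping):
    """Fraction of logically adjacent block pairs still physically adjacent."""
    n = len(mapping)
    adjacent = sum(
        1 for lba in range(n - 1) if mapping[lba + 1] == mapping[lba] + 1
    )
    return adjacent / (n - 1)


if __name__ == "__main__":
    for overwrites in (0, 256, 4096):
        layout = append_only_layout(1024, overwrites)
        print(overwrites, round(contiguous_fraction(layout), 3))
```

With no overwrites the layout is fully contiguous (a fraction of 1.0). As overwrites accumulate the fraction falls, so a read that is sequential in logical terms is served from scattered physical locations even though every write was sequential.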

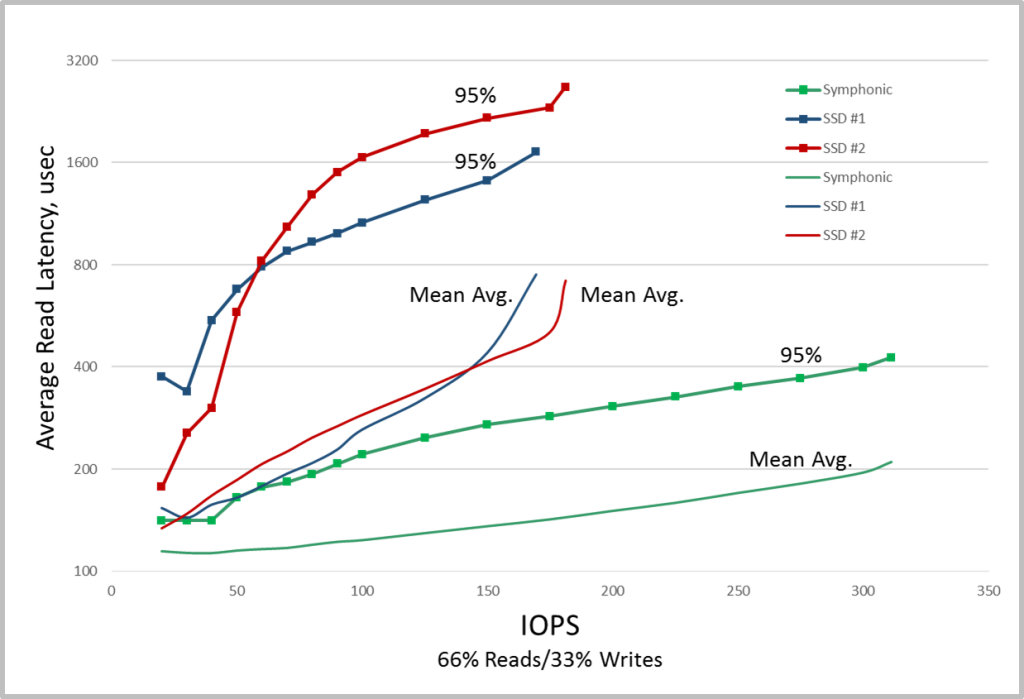

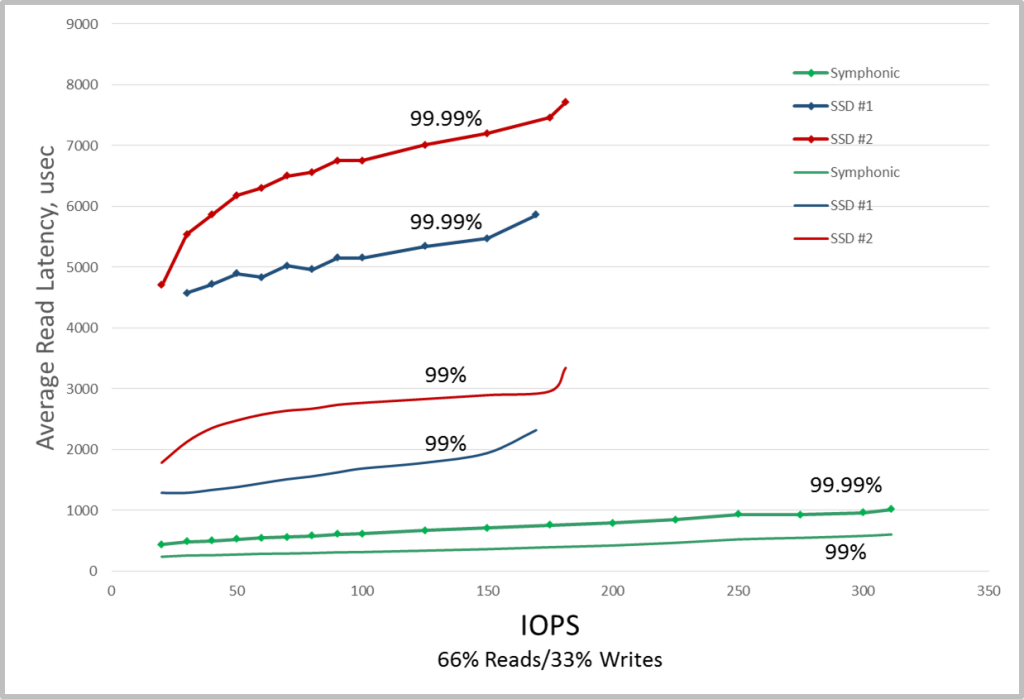

As a result, latency and QoS for these random read operations correlate with bandwidth: latency spikes increase in frequency and magnitude as total bandwidth and IOPS increase.

This combination of operations is challenging for Flash, but especially challenging for FTL SSDs. The only option for FTLs to mitigate these spikes is to overprovision more raw Flash capacity, increasing costs to the user.

Scaling IOPS with deterministic latency