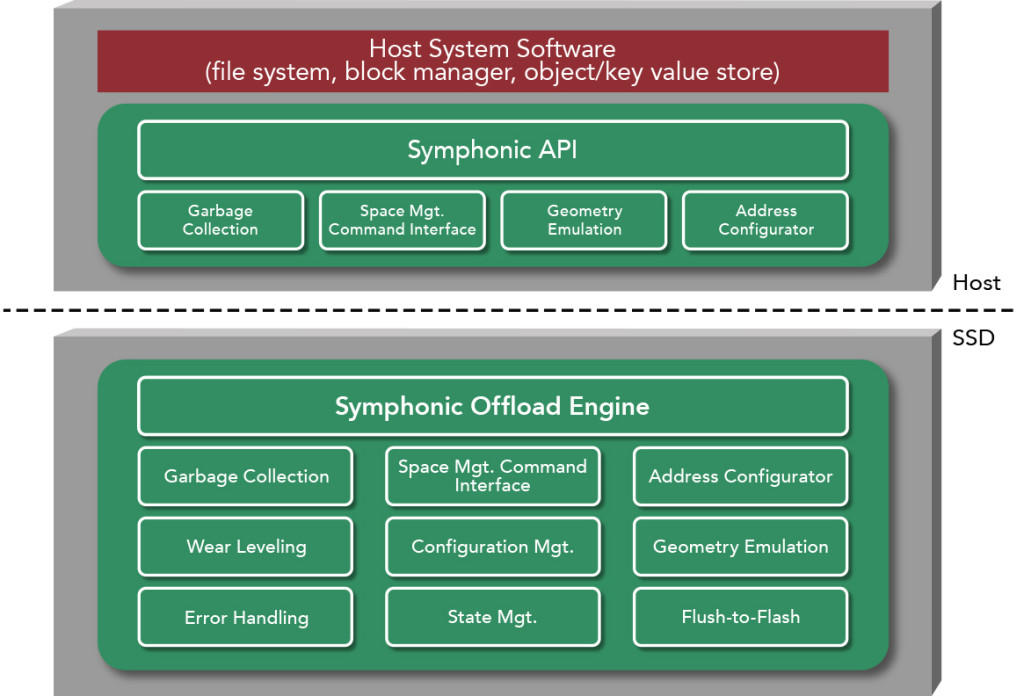

Symphonic is a combination of host-side software libraries, an API and SSD firmware that enables system software to cooperatively perform Flash management processes to realize the full potential of Flash storage.

The Symphonic functionality includes configurable address mapping, geometry emulation, garbage collection, wear leveling, and reliability features. Replacing the SSD FTL, Symphonic turns the SSD into an offload engine while operating in host address space and providing the functionality of a data center class product.

Symphonic’s Address Configurator and Geometry Emulation reside in SSD firmware and host libraries. The Address Configurator enables existing host stacks to select the optimal balance between opposing performance and efficiency objectives. Combined with Symphonic’s Geometry Emulation, the Address Configurator abstracts NAND constraints and the array’s topology while maintaining symmetric alignment with the physical memory array.

The Address Configurator also enables the existing host segment cleaner to control and schedule Flash garbage collection processes.

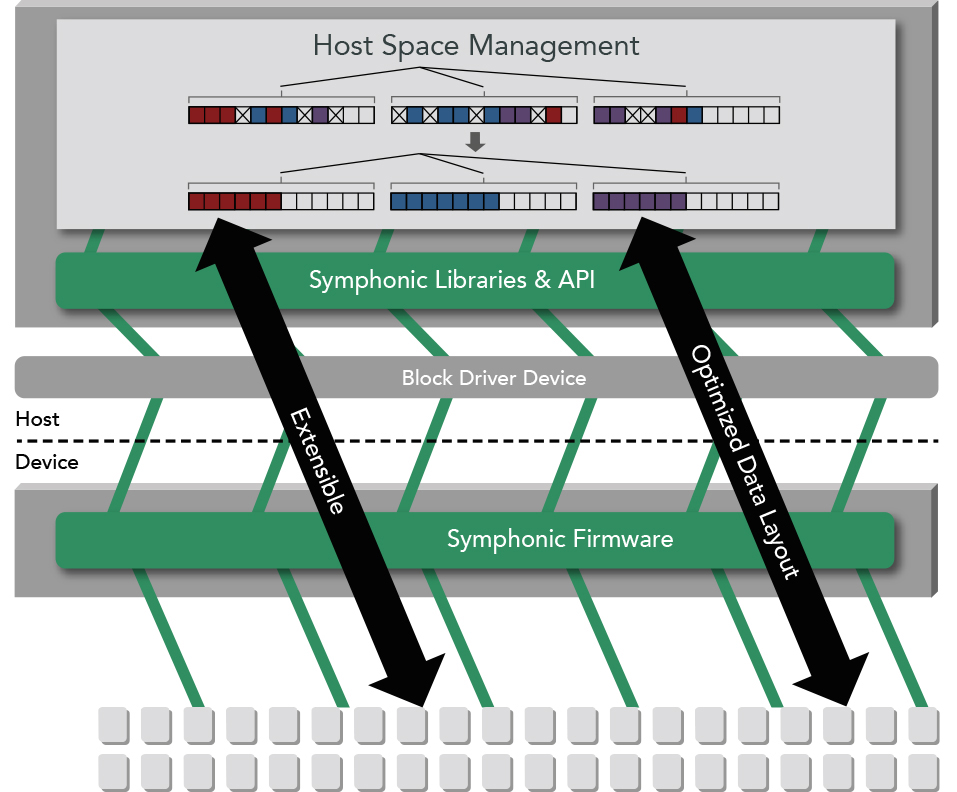

This mechanism enables new, more optimized cleaning policies that cannot be achieved with FTL-based SSDs. And while the topology of the physical array is abstracted, the host’s optimizations around data layout are carried through to placement on the physical media.

The result is a solution that minimizes host modifications and hardware dependencies, while enabling control over scheduling and data layout to minimize latency spikes and write amplification, and achieve maximum parallelization.

The Symphonic firmware is a collection of integrated state machines, mapping and translation tables, and counters explicitly designed to provide Cooperative Flash Management (CFM). Residing on the SSD’s embedded processor, the firmware replaces the FTL and performs on-device garbage collection, wear leveling, and bad block management.

Cooperative Garbage Collection

Cooperative garbage collection is provided by firmware state machines integrated with mapping and translation tables. Statistical counters track valid/release states, while the garbage collection engine monitors event timestamps and applies heuristic analysis to generate fine-grained range fragmentation and qualitative metrics. The resulting metadata is consumed by embedded flash management processes and is also formatted and exported to host-resident libraries, with support for event trigger notifications.

The garbage collection engine can perform copy/move/erase operations entirely on the SSD, under the control of host-initiated commands and while operating in host address space. Other modules in the garbage collection engine provide configurable threshold parameters and support asynchronous event notifications, queries and alerts.
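The interplay of valid/release counters, a configurable threshold, and event notifications can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the names `SegmentStats`, `GcMonitor`, `fragmentation`, and `candidates`, the per-segment granularity, and the simple released-to-written ratio are hypothetical and do not describe the actual Symphonic firmware or metadata format.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SegmentStats:
    """Per-segment counters (hypothetical layout, not the Symphonic format)."""
    valid_pages: int = 0
    released_pages: int = 0

    def fragmentation(self) -> float:
        # Fraction of written pages that the host has since released.
        written = self.valid_pages + self.released_pages
        return self.released_pages / written if written else 0.0


@dataclass
class GcMonitor:
    threshold: float                      # configurable trigger level, 0.0 to 1.0
    notify: Callable[[int, float], None]  # stand-in for an event sent to the host
    segments: Dict[int, SegmentStats] = field(default_factory=dict)

    def write(self, seg: int, pages: int) -> None:
        self.segments.setdefault(seg, SegmentStats()).valid_pages += pages

    def release(self, seg: int, pages: int) -> None:
        stats = self.segments.setdefault(seg, SegmentStats())
        pages = min(pages, stats.valid_pages)  # cannot release more than is valid
        stats.valid_pages -= pages
        stats.released_pages += pages
        frag = stats.fragmentation()
        if frag >= self.threshold:
            self.notify(seg, frag)

    def candidates(self) -> List[int]:
        # Segments ordered from most to least fragmented, for a host query.
        return sorted(self.segments,
                      key=lambda s: self.segments[s].fragmentation(),
                      reverse=True)


events = []
monitor = GcMonitor(threshold=0.5,
                    notify=lambda seg, frag: events.append((seg, frag)))
monitor.write(0, 100)
monitor.write(1, 100)
monitor.release(0, 60)  # segment 0 crosses the 0.5 threshold
monitor.release(1, 10)  # segment 1 stays below it
```

In this sketch the host's segment cleaner would react to the notification for segment 0, or poll `candidates()` and schedule cleaning at a time of its choosing.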

Wear Leveling

Wear leveling and bad block management are handled in a firmware engine that tracks a range of variables accounting for factors such as program/erase cycles, program times, corrected bit errors, retries and disturbs. By default, the wear management functionality is integrated with the garbage collection engine and performed transparently to the host system’s segment cleaning process. An optional mode is available to support host-based wear leveling, with each mode implemented in a manner that enables vendor-supported warranties.
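As a rough illustration of how several tracked variables might be combined into one wear decision, the sketch below scores blocks and picks the least worn usable one. The `BlockHealth` fields, the `WEIGHTS` values, and the linear scoring formula are assumptions chosen for the example, not the method used by Symphonic.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class BlockHealth:
    block_id: int
    pe_cycles: int        # program/erase cycles
    corrected_bits: int   # corrected bit errors observed
    read_retries: int
    bad: bool = False     # retired by bad block management


# Illustrative weights only; a real device would tune these per NAND part.
WEIGHTS = {"pe_cycles": 1.0, "corrected_bits": 0.5, "read_retries": 2.0}


def wear_score(block: BlockHealth) -> float:
    """Higher score means a more worn block (hypothetical linear model)."""
    return (WEIGHTS["pe_cycles"] * block.pe_cycles
            + WEIGHTS["corrected_bits"] * block.corrected_bits
            + WEIGHTS["read_retries"] * block.read_retries)


def pick_write_target(blocks: Iterable[BlockHealth]) -> BlockHealth:
    """Return the least worn usable block, skipping blocks marked bad."""
    usable = [b for b in blocks if not b.bad]
    if not usable:
        raise RuntimeError("no usable blocks")
    return min(usable, key=wear_score)


blocks = [
    BlockHealth(0, pe_cycles=1200, corrected_bits=40, read_retries=3),
    BlockHealth(1, pe_cycles=900, corrected_bits=10, read_retries=0),
    BlockHealth(2, pe_cycles=100, corrected_bits=0, read_retries=0, bad=True),
]
target = pick_write_target(blocks)
```

Block 2 has the lowest raw wear but is excluded because it is marked bad, so the selection falls to block 1.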

Designed around ACID principles, Symphonic supports the RAS (Reliability, Availability, and Serviceability) requirements essential for data center products. This involves fault-tolerant maintenance of data and metadata, and capabilities that support treating the SSD as a Field Replaceable Unit (FRU).

Geometry Emulation

In exporting the geometry of the device, the Symphonic firmware employs geometry emulation in cooperation with Symphonic host libraries to virtualize the topology of the NAND array. The firmware also transparently handles and abstracts various NAND properties and programming requirements, including geometry and vendor-specific attributes. This functionality enables Forward Compatibility to support different NAND devices.
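One way to picture a virtualized topology is a reversible mapping between a linear page index and an emulated (channel, die, block, page) address. The sketch below assumes a simple channel-first striping order; the `Geometry` and `EmulatedAddr` types, the field names, and the striping order are all hypothetical and are not taken from the Symphonic firmware.

```python
from typing import NamedTuple


class Geometry(NamedTuple):
    channels: int
    dies_per_channel: int
    blocks_per_die: int
    pages_per_block: int

    def total_pages(self) -> int:
        return (self.channels * self.dies_per_channel
                * self.blocks_per_die * self.pages_per_block)


class EmulatedAddr(NamedTuple):
    channel: int
    die: int
    block: int
    page: int


def to_emulated(page_index: int, g: Geometry) -> EmulatedAddr:
    """Stripe consecutive pages across channels first, then across dies."""
    if not 0 <= page_index < g.total_pages():
        raise ValueError("page index out of range")
    channel = page_index % g.channels
    rest = page_index // g.channels
    die = rest % g.dies_per_channel
    rest //= g.dies_per_channel
    page = rest % g.pages_per_block
    block = rest // g.pages_per_block
    return EmulatedAddr(channel, die, block, page)


def to_linear(a: EmulatedAddr, g: Geometry) -> int:
    """Inverse of to_emulated."""
    return (((a.block * g.pages_per_block + a.page) * g.dies_per_channel
             + a.die) * g.channels + a.channel)


geometry = Geometry(channels=4, dies_per_channel=2,
                    blocks_per_die=8, pages_per_block=16)
first_eight = [to_emulated(i, geometry) for i in range(8)]
```

With this ordering, eight consecutive pages land on eight distinct (channel, die) pairs, which is the kind of alignment that lets sequential host writes proceed in parallel across the array.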

The Symphonic host libraries and API provide extensible access and management, interfacing into the existing functionality of the target host stack to enable cooperative control over the processes executed by the SSD.

Based on standard interfaces with vendor-specific extensions, the API and device driver coordinate paths from the host, through the OS, to the Symphonic SSD firmware.

A single layer at the system-level can efficiently span multiple SSDs, avoiding duplication and synchronization of overlapping functionality. This extensible approach provides control and symmetry from the host stack down through the device.

Host intelligence and functionality, such as data locality or TRIM (release), become implicit processes coherent throughout the system.

The Symphonic host libraries and API provide:

- Interface and mechanisms to the host space management system

- Data accessible via logical block addresses (LBAs)

- Support for read and write accesses of 4K or larger

- Interface for configurable address mapping, layout, and striping with tunable parameters

- Interface for Geometry Emulation that virtualizes physical topology of NAND array while providing symmetric alignment

- Simple and efficient access to formatted metadata generated by Symphonic device firmware

- Commands to control and schedule garbage collection processes

- Optional mode to support host-based wear leveling utilizing metrics generated by Symphonic device firmware

- Mechanisms for setting thresholds, queries, alerts, and event notifications

API & Device Driver

A Symphonic SSD is treated as a block device through an API based on the industry-standard NVMe command set, and the design is also intended to support SAS interfaces. The initial target is the Linux OS: no modifications to the mainline kernel are required, and the standard NVMe device driver can be used to support most functionality. A modified Symphonic device driver is also available to support specific diagnostic utilities and optional advanced functionality.

Symphonic API:

- Based on the NVMe command set, utilizing its vendor-specific management and I/O extensions

- Designed to accommodate SAS (SCSI protocol) utilizing vendor-specific extensions

- Portable to different OS environments to support host stacks ranging from block virtualization to file systems and object/key value stores

- SSDs appear as block devices

- Concurrently supports multiple block devices
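For context on what vendor-specific extensions mean at the command level, the NVMe specification reserves opcodes C0h to FFh in the admin command set and 80h to FFh in the NVM I/O command set for vendor-specific use. The sketch below checks candidate opcodes against those ranges. The command names and opcode values in `HYPOTHETICAL_COMMANDS` are invented for illustration and are not the actual Symphonic command set.

```python
# Vendor-specific opcode ranges reserved by the NVMe specification.
ADMIN_VENDOR_OPCODES = range(0xC0, 0x100)  # admin (management) commands
IO_VENDOR_OPCODES = range(0x80, 0x100)     # NVM I/O commands

# Hypothetical commands and opcodes, invented for this sketch.
HYPOTHETICAL_COMMANDS = {
    "query_gc_metadata": ("admin", 0xC1),
    "move_range": ("io", 0x81),
}


def is_vendor_specific(queue: str, opcode: int) -> bool:
    """Return True if the opcode lies in the vendor-specific range for the queue type."""
    if queue == "admin":
        return opcode in ADMIN_VENDOR_OPCODES
    if queue == "io":
        return opcode in IO_VENDOR_OPCODES
    raise ValueError(f"unknown queue type: {queue!r}")


checks = {name: is_vendor_specific(queue, opcode)
          for name, (queue, opcode) in HYPOTHETICAL_COMMANDS.items()}
```

Because such commands stay inside the reserved ranges, they can coexist with the standard command set on the same block device.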