NV-RAM on NVMe

Post 1

NV-RAM vs. 3D XPoint

Metrics that Matter

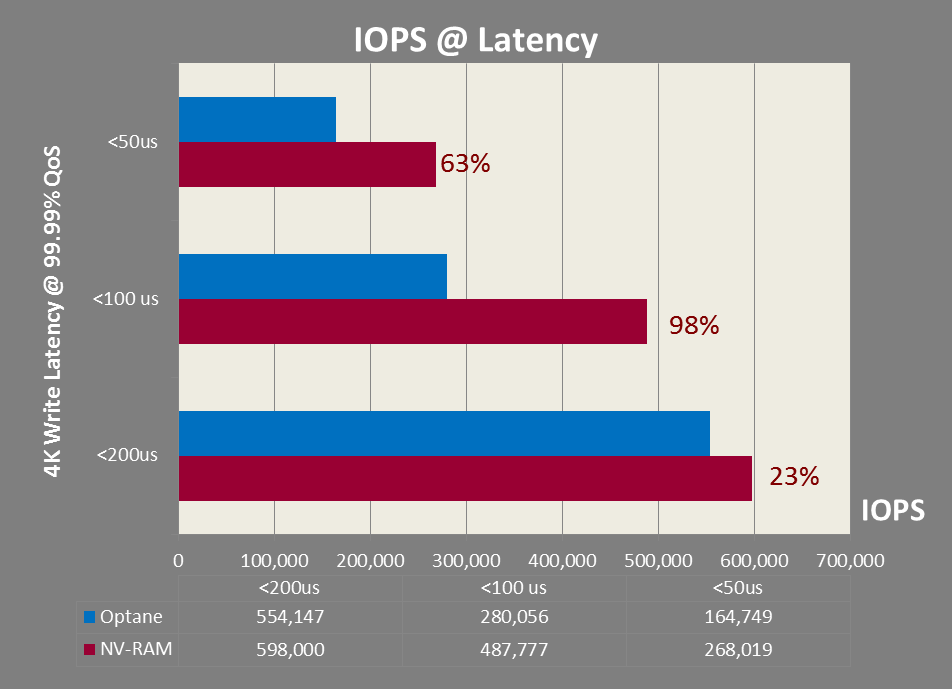

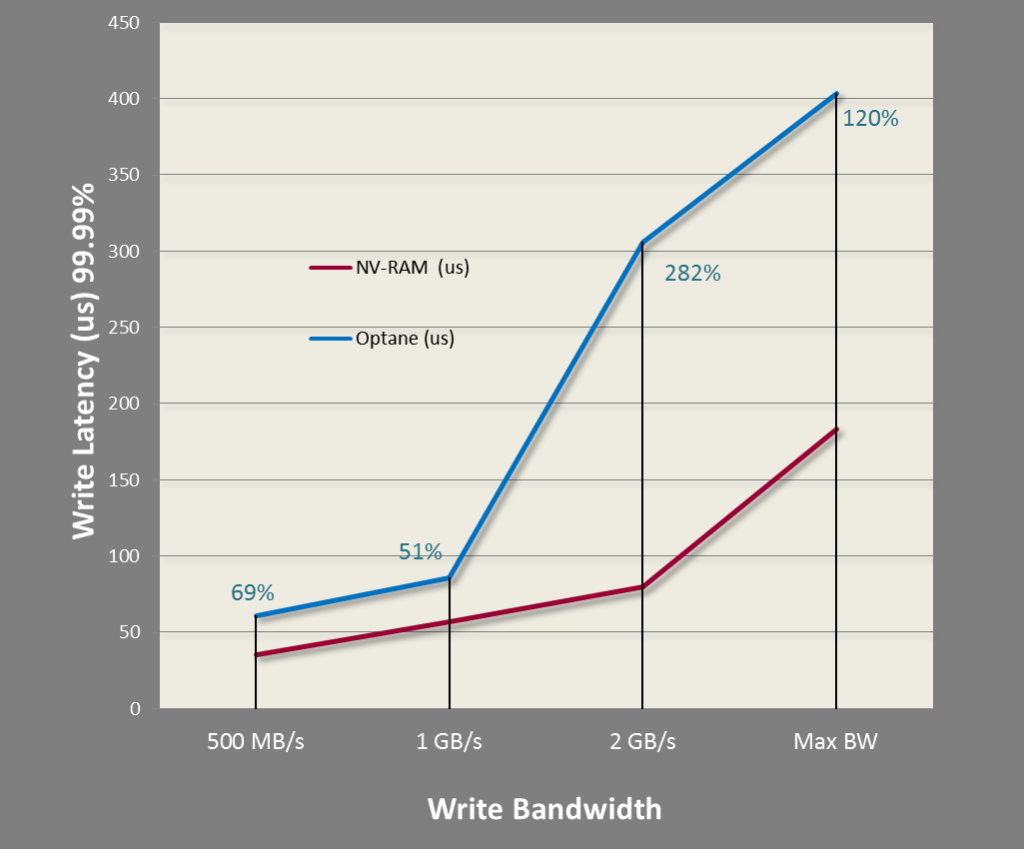

While bandwidth, IOPS and tail latency are each important, evaluating them in isolation is often of limited value. What matters most to data center applications are metrics like IOPS @ Latency, or Latency @ Bandwidth.

In this test, we can imagine an application that has a maximum acceptable latency threshold, e.g., <50 us, <100 us, or <200 us, and is seeking the highest IOPS possible at that threshold: IOPS @ Latency. The NV-RAM drive achieves 63% higher IOPS than the Optane drive at <50 us, 98% higher at <100 us, and 23% higher at <200 us.
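As a rough illustration of how an IOPS @ Latency figure can be read off a load sweep, the sketch below picks the highest IOPS among test points whose tail latency stays under a threshold. The function name `iops_at_latency` and the sweep values are hypothetical placeholders for illustration only; they are not our measured results.

```python
def iops_at_latency(sweep, max_latency_us):
    """Return the highest IOPS among sweep points whose tail latency
    is strictly below max_latency_us, or None if no point qualifies.

    sweep: iterable of (iops, tail_latency_us) pairs, one per load level.
    """
    qualifying = [iops for iops, latency_us in sweep if latency_us < max_latency_us]
    return max(qualifying) if qualifying else None


# Hypothetical sweep data (NOT measured results): (IOPS, tail latency in us)
example_sweep = [
    (100_000, 20.0),
    (200_000, 45.0),
    (300_000, 90.0),
    (400_000, 180.0),
]

for threshold_us in (50, 100, 200):
    print(f"IOPS @ <{threshold_us} us:", iops_at_latency(example_sweep, threshold_us))
```

Running the same selection over each drive's sweep and comparing the results at a given threshold yields the kind of percentage difference quoted above.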

We believe this final test is the most representative of how storage system designers evaluate the performance of potential NV-RAM products. They typically start with a specific random write bandwidth requirement, then look for the device that provides the best write latency QoS at that bandwidth, i.e., Latency @ Bandwidth. At this 99.99% QoS level, the Optane latencies are 51% to 282% higher than those of the NV-RAM drive at the specified write bandwidths.

Endurance Requirement

In addition to requiring non-volatile storage, these logging/caching applications often involve near-continuous write data rates of at least 1 GB/s to 2 GB/s, which can pose an insurmountable obstacle for certain types of Storage Class Memory (SCM), such as 3D XPoint.

The simple math tells us that a sustained data rate of 1 GB/s to a device with 8 GB of storage capacity requires over 10,000 Drive Writes Per Day (DWPD): 86,400 seconds per day × 1 GB/s ÷ 8 GB = 10,800 DWPD. At 2 GB/s it requires over 20,000 DWPD. Because it is based on DDR4 memory, the Radian/Viking NV-RAM drive has no problem meeting these requirements. By contrast, the data center class Optane drive delivers 30 DWPD for 5 years.

But what if we massively overprovisioned, by almost 50x? Continuing with a hypothetical requirement for 8 GB of write log capacity, dedicating an entire 375 GB Optane drive to the application would effectively be overprovisioning by 47x. Even with all of this overprovisioning, a sustained write bandwidth of 1 GB/s would still require 230 DWPD from the Optane drive, and 2 GB/s would require 460 DWPD, well beyond its 30 DWPD rating.
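The endurance arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch that assumes decimal gigabytes and a perfectly sustained write rate over 24 hours; the helper name `required_dwpd` is ours, purely for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def required_dwpd(write_gb_per_s, capacity_gb):
    """Drive Writes Per Day needed to absorb a sustained write rate.

    Assumes the rate is held for the full day and capacity is in decimal GB.
    """
    return write_gb_per_s * SECONDS_PER_DAY / capacity_gb


OPTANE_RATED_DWPD = 30  # rating quoted in the text

for capacity_gb in (8, 375):
    for rate_gb_per_s in (1, 2):
        dwpd = required_dwpd(rate_gb_per_s, capacity_gb)
        print(
            f"{capacity_gb:>3} GB device at {rate_gb_per_s} GB/s: "
            f"{dwpd:,.1f} DWPD ({dwpd / OPTANE_RATED_DWPD:,.1f}x the 30 DWPD rating)"
        )

# Overprovisioning factor when a 375 GB drive backs an 8 GB log
print(f"Overprovisioning: {375 / 8:.1f}x")
```

The 375 GB cases come out at roughly 230 and 461 DWPD, in line with the rounded figures above, and still several times the 30 DWPD rating.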

Summary

In general, we were impressed by Optane’s performance, primarily around IOPS. NV-RAM has a meaningful advantage over Optane with respect to tail latencies and in providing bandwidth or IOPS at data center QoS latencies. For the most common NVMe NV-RAM applications, those that involve write-ahead logging and caching, we do not believe Optane is a viable alternative because it cannot meet the endurance requirements of these applications. And in fairness to Intel, they have not been marketing Optane as a solution for these applications.

What’s coming next…

Thanks for reading through the post; we hope you found it insightful. Check back with us in the weeks ahead to see our follow-on posts with results from testing the NV-RAM drive with byte-addressable mmap accesses, i.e., via the Persistent Memory Region (PMR), with the Linux kernel. That will be followed by some interesting results from testing with SPDK, including NV-RAM in SPDK, Optane in SPDK, and how the same tests compare using the Linux kernel. The results even surprised us.
